All things being equal

By Ben Smith

Published 14 Mar 2017

An A in Physics should have the same meaning as an A in English Literature: that's the purpose of a common grading system. In practical terms, we recognise this in university offers that are based on grades rather than subjects, e.g. 'three grade Bs'. However, there is a debate around whether certain subjects are easier than others, and therefore whether the grades in each are of equal value.

Our exam system is set up to award grades that can be compared across subjects, and over different years. In UK assessment circles, comparability across subjects is known as 'inter-subject comparability'. We can investigate inter-subject comparability via 'subject pairs analysis'. As the name suggests, this involves selecting two subjects, identifying a group of students who take both, and comparing the average grade this group achieved in each. We assume that since we are looking at the same people in each subject, differences in their average grade will reflect differences in the demand of the subjects. As an example, a higher average grade in Physics than Biology might imply that Physics is easier to get an A in than Biology, at least for these particular students.
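To make the idea concrete, here is a minimal Python sketch of a subject pairs comparison. The student records, the grade-point scale and the function name `subject_pairs_difference` are all made up for illustration; this is not the code used in the analysis described in this post.

```python
from statistics import mean

def subject_pairs_difference(results, subject_a, subject_b):
    """Mean grade difference (a minus b) among students who sat both subjects.

    `results` maps a student id to a dict of {subject: grade points}.
    Returns (n_students, mean_difference), or (0, None) if nobody sat both.
    """
    diffs = [
        grades[subject_a] - grades[subject_b]
        for grades in results.values()
        if subject_a in grades and subject_b in grades
    ]
    if not diffs:
        return 0, None
    return len(diffs), mean(diffs)

# Made-up data on a hypothetical points scale (A=5, B=4, C=3, ...)
results = {
    "s1": {"Physics": 5, "Biology": 4},
    "s2": {"Physics": 4, "Biology": 4},
    "s3": {"Physics": 3, "Biology": 2},
    "s4": {"Physics": 5},                # sat only one of the pair: excluded
    "s5": {"Biology": 3, "Drama": 5},    # sat only one of the pair: excluded
}

n, diff = subject_pairs_difference(results, "Physics", "Biology")
```

Only three of the five students contribute to the comparison here, which hints at how quickly the usable sample can shrink for less common subject combinations.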

But there are a number of problems with the subject pairs method. When comparing small or disparate subjects, there are often only a small number of candidates who sit both, so our analysis lacks statistical power. There are also concerns about bias: the students who sit both Drama and Further Mathematics are not likely to be representative of the candidates who sit only one of the two, as an extreme example!

I recently tried out an alternative to subject pairs analysis that avoids some of these issues: propensity score matching (PSM). This method is similar to subject pairs in that it matches students taking one subject to those taking a second, but it does this based on them having similar characteristics rather than them being precisely the same person. You can define what 'similar' means by choosing the variables to match candidates on. Based on these, you compute the titular 'propensity score' for all candidates in the first subject, and pair each up with the candidate with the closest propensity score in the second subject. This allows a far higher match rate than subject pairs.
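The matching step can be sketched in a few lines of Python. This is an illustration only, under some loud assumptions: the propensity score is estimated with a simple logistic regression fitted by gradient descent (a common choice, but not necessarily what was used here), matching is nearest-neighbour with replacement, and the candidates, covariates and function names are invented.

```python
import math

def propensity(weights, covariates):
    """Logistic model: estimated probability that a candidate sits subject X."""
    z = weights[0] + sum(w * c for w, c in zip(weights[1:], covariates))
    z = max(-30.0, min(30.0, z))  # clamp to keep math.exp well-behaved
    return 1.0 / (1.0 + math.exp(-z))

def fit_logistic(rows, labels, lr=0.1, epochs=2000):
    """Fit [bias, w1, ...] by plain batch gradient descent on the log-loss."""
    weights = [0.0] * (len(rows[0]) + 1)
    for _ in range(epochs):
        grad = [0.0] * len(weights)
        for row, label in zip(rows, labels):
            err = propensity(weights, row) - label
            grad[0] += err
            for j, value in enumerate(row):
                grad[j + 1] += err * value
        weights = [w - lr * g / len(rows) for w, g in zip(weights, grad)]
    return weights

def nearest_neighbour_match(scores_x, scores_other):
    """For each subject X score, index of the closest score in the other subject.

    Matching is with replacement, so one comparison candidate can be reused.
    """
    return [
        min(range(len(scores_other)), key=lambda j: abs(scores_other[j] - s))
        for s in scores_x
    ]

# Made-up candidates: ([standardised mean GCSE score, female (0/1)], grade points)
subject_x = [([0.9, 1], 5), ([0.2, 0], 4), ([-0.4, 1], 3), ([1.3, 0], 5)]
other = [([0.8, 0], 4), ([-0.5, 1], 3), ([0.1, 1], 4), ([-1.2, 0], 2), ([0.3, 0], 3)]

# Model the chance of sitting subject X from the chosen matching variables
rows = [cov for cov, _ in subject_x + other]
labels = [1] * len(subject_x) + [0] * len(other)
weights = fit_logistic(rows, labels)

scores_x = [propensity(weights, cov) for cov, _ in subject_x]
scores_other = [propensity(weights, cov) for cov, _ in other]
matches = nearest_neighbour_match(scores_x, scores_other)

# Mean grade difference (subject X minus matched comparison candidate)
grade_gap = sum(
    grade - other[j][1] for (_, grade), j in zip(subject_x, matches)
) / len(subject_x)
```

Every candidate in subject X receives a match, which is where the high match rate comes from. Whether those matches are any good is a separate question.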

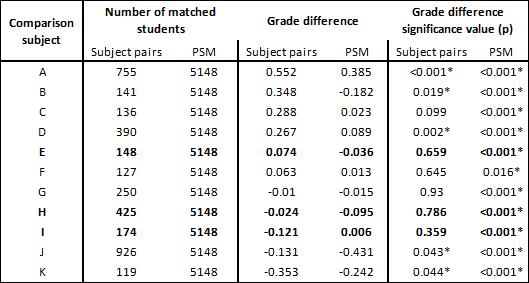

The table below outlines the results of a set of PSMs and subject pairs analysis comparing the standard of a single A-level subject (subject X) to several other A-levels (subjects A-I), each in turn. As you can see, far more data is included in the PSMs (the whole cohort sitting subject X was matched), and the differences we observe are far more statistically significant than the differences in the subject pairs analysis. You'll also see that the key grade difference measure can differ a lot between the two methods, at least in some subjects. Subject B, for example, seems a third of a grade easier than subject X based on the subject pairs analysis, but a PSM suggests that it is just under a fifth of a grade harder.

* = significant at the p<0.05 level

So, due to the high match rates in a PSM, the results are likely to be more robust than those of a subject pairs analysis. But that is not the whole story, because this conclusion rests upon the quality of your match. When conducting a PSM, it is important to check whether your matching process has rendered all the chosen variables comparable. If this has not happened, you're in trouble, and the central tenet of using matched groups of candidates to infer the standard of subjects falls down: you're not comparing similar students anymore.

Three of the subjects compared with subject X in the table above are emboldened: in the PSM, the matched candidates for each of these subjects had significantly different mean GCSE scores to the candidates they were matched with in subject X. This meant that for these particular subject matches, we couldn't rely on the results the PSM gave us, because the most important variable to match on (prior attainment) simply wasn't equivalent in each group. By comparison, in the subject pairs analysis, the mean GCSE scores in each pair of subjects were identical, as exactly the same students were used in each.
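One simple way to check the quality of a match is sketched below in Python. Note the swap: instead of a significance test on the mean GCSE scores, this uses the standardised mean difference, a common balance diagnostic in the matching literature, with the widely quoted rule of thumb that absolute values above roughly 0.1 suggest imbalance. The GCSE scores and the function name are made up for illustration.

```python
import math
from statistics import mean, variance

def standardised_mean_difference(group_a, group_b):
    """Difference in means divided by the pooled standard deviation.

    Assumes each group has at least two values and some spread.
    """
    pooled_sd = math.sqrt((variance(group_a) + variance(group_b)) / 2)
    return (mean(group_a) - mean(group_b)) / pooled_sd

# Made-up mean GCSE scores for subject X candidates and two sets of matches
gcse_x = [6.8, 7.1, 5.9, 6.4, 7.5]
gcse_matched_good = [6.7, 7.0, 6.0, 6.5, 7.4]   # prior attainment lines up
gcse_matched_poor = [5.9, 6.2, 5.1, 5.6, 6.8]   # matches are weaker on average

smd_good = standardised_mean_difference(gcse_x, gcse_matched_good)
smd_poor = standardised_mean_difference(gcse_x, gcse_matched_poor)

# Rule-of-thumb threshold (an assumption, not a value from this analysis)
THRESHOLD = 0.1
balanced_good = abs(smd_good) < THRESHOLD
balanced_poor = abs(smd_poor) < THRESHOLD
```

In the second case the matched group has clearly lower prior attainment, so any grade difference between the subjects could simply reflect the students rather than the standard of the subjects.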

Using PSM rather than subject pairs to investigate inter-subject comparability is not going to magically solve the perennial debate as to whether an A in one subject is of equal value to an A in any other. But it does offer an alternative approach that seems to be more robust, and crucially, can be used to compare low-entry or disparate subjects, where small (or biased) samples hamper other methods. Here in CERP, we are tinkering away at this; if the quality of the match can be improved, the method can be used for much more than just comparing subject standards, but that’s a story for another blog.

Ben Smith

This analysis was performed using the 'Matching' package for R. Those interested might also wish to investigate the 'MatchIt' package, which offers slightly different options for the matching procedure.
