Who should mark our science exams?

By William Pointer

Published 27 Mar 2014

Ensuring that exams are reliably marked is a key concern for AQA, and a real interest for me. Others seem to agree. The quality of marking of high-stakes examinations, such as GCSEs, seems to hit the headlines every year [1].

GCSE Science

Most AQA science exams test only one scientific discipline at a time, but there are some exams, such as AQA’s GCSE Science A, that assess biology, chemistry and physics in a single paper. In these exams, the markers mark items across all three subjects. This raises an important question: does this reduce the reliability of their marking?

The research literature suggests that a marker’s highest level of education is a better predictor of marking reliability than subject expertise [2, 3]. Couple this with the fact that the examiners have all opted to mark a combined exam, and it would seem that there is unlikely to be a problem. But it would be good to have some empirical evidence to support this position.

The seeds of an idea

AQA uses ‘seeds’ to monitor the quality of marking in GCSE science exams. Seeds are responses that are pre-marked by senior examiners. During a normal examiner’s marking, seeds will be included at a given interval. This allows AQA to monitor examiners’ marking in real time by comparing the mark awarded by the examiner to the seed mark.
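The comparison between an examiner's marks and the seed marks can be sketched in a few lines of Python. This is only an illustration of the idea: the `SeedResult` record, the function names, the tolerance parameter and the example marks are all hypothetical, and nothing here describes AQA's actual monitoring rules.

```python
from dataclasses import dataclass


@dataclass
class SeedResult:
    seed_id: str
    seed_mark: int      # mark agreed in advance by senior examiners
    examiner_mark: int  # mark awarded by the examiner being monitored


def exact_agreement_rate(results):
    """Share of seeds where the examiner's mark equals the seed mark."""
    if not results:
        raise ValueError("no seed results to compare")
    matches = sum(1 for r in results if r.examiner_mark == r.seed_mark)
    return matches / len(results)


def seeds_out_of_tolerance(results, tolerance=0):
    """IDs of seeds where the examiner differs from the seed mark by more
    than `tolerance` marks (the tolerance value is an assumption)."""
    return [
        r.seed_id
        for r in results
        if abs(r.examiner_mark - r.seed_mark) > tolerance
    ]


# Made-up example data for one examiner.
results = [
    SeedResult("s1", seed_mark=3, examiner_mark=3),
    SeedResult("s2", seed_mark=2, examiner_mark=4),
    SeedResult("s3", seed_mark=1, examiner_mark=1),
    SeedResult("s4", seed_mark=5, examiner_mark=4),
]
print(exact_agreement_rate(results))
print(seeds_out_of_tolerance(results, tolerance=1))
```

Because every seed carries both a definitive mark and an examiner's mark, records like these accumulate into a mark-remark dataset as marking proceeds.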

The main purpose of seeding is to stop errant examiners so that they can be retrained. However, a neat by-product of the seeding process is a rich dataset of mark-remark information, which is ideal for my investigation of marking consistency in GCSE Science A.

The research

In general, I found the quality of GCSE Science A marking was high. Indeed, it seemed comparable to marking in other units that assessed just one science discipline. This provided some evidence that examiners were not struggling to mark outside their specialism. So I focused my attention on the 125 hardest-to-mark seeds to see if I could tease out any differences between the groups of specialists.

I used the partial credit model [4] to describe the reliability of each examiner and the difficulty of marking each seed. I then used the results from this model to see whether examiners were equally reliable when marking each science discipline.
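For readers unfamiliar with the model, the sketch below computes category probabilities under Masters' partial credit model in its standard form. Treating `theta` as an examiner's reliability and the step values in `deltas` as the difficulty of marking a seed is an assumption made for illustration, not a description of the exact analysis; the function name and the example values are hypothetical.

```python
import math


def pcm_probabilities(theta, deltas):
    """Category probabilities under the partial credit model.

    theta  : person parameter (assumed here to stand for examiner reliability)
    deltas : step difficulties delta_1..delta_m for one item
    Returns [P(X=0), ..., P(X=m)].
    """
    # Cumulative sums of (theta - delta_k); category 0 has an empty sum of 0.
    cumulative = [0.0]
    for delta in deltas:
        cumulative.append(cumulative[-1] + (theta - delta))
    # Subtract the maximum before exponentiating for numerical stability.
    largest = max(cumulative)
    weights = [math.exp(c - largest) for c in cumulative]
    total = sum(weights)
    return [w / total for w in weights]


# Made-up values: one examiner, one seed with three steps.
for category, p in enumerate(pcm_probabilities(1.0, [-0.5, 0.5, 1.5])):
    print(category, round(p, 3))
```

With a single step the model reduces to the dichotomous Rasch model, so a person parameter equal to the step difficulty gives a probability of one half for each category.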

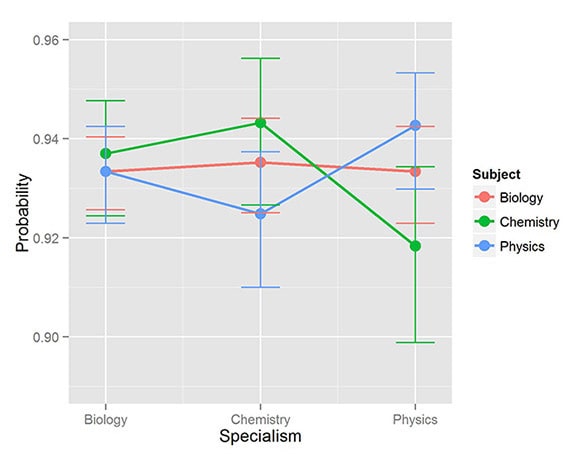

The figure below shows the probability that each group of examiners will mark an item of average difficulty correctly, along with the associated 95% confidence intervals. The figure shows that the probability that the biologists will mark the response correctly is around 93% regardless of what subject the item is assessing. There is more variability in the results for the chemists and physicists, but all the results fall within overlapping confidence intervals.

Probability of marking an item of average difficulty correctly

It is worth remembering that these probabilities relate to the hardest-to-mark seeds and so are likely to exaggerate any differences between the subjects. So it’s a reassuring story: GCSE science markers appear equally well suited to mark biology, chemistry and physics.



References

1. Jordan, C. (2014, January 4). We need to end the annual exam marking scandal. The Telegraph. Retrieved February 11, 2014, from http://www.telegraph.co.uk/education/educationopinion/10550133/We-need-to-end-the-annual-exam-marking-scandal.html
2. Suto, I., Nadas, R., & Bell, J. (2011). Who should mark what? A study of factors affecting marking accuracy in a biology examination. Research Papers in Education, 26(1), 21–52.
3. Meadows, M., & Billington, L. (2010). The effect of marker background and training on the quality of marking in GCSE English. Manchester, UK: AQA Centre for Education Research and Policy.
4. Masters, G. (1982). A Rasch model for partial credit scoring. Psychometrika, 47, 149–174.
